What Everyone Else Submitted to FDA on the Bayesian Guidance

The comment period for FDA's draft guidance on Bayesian methodology in clinical trials of drugs and biologics closed on March 13, 2026. I wrote about my own submission a few weeks ago. FDA guidance often shapes trial design years before it is finalized, so the public comments on a draft like this one are worth paying attention to. They reveal where the real tensions live.

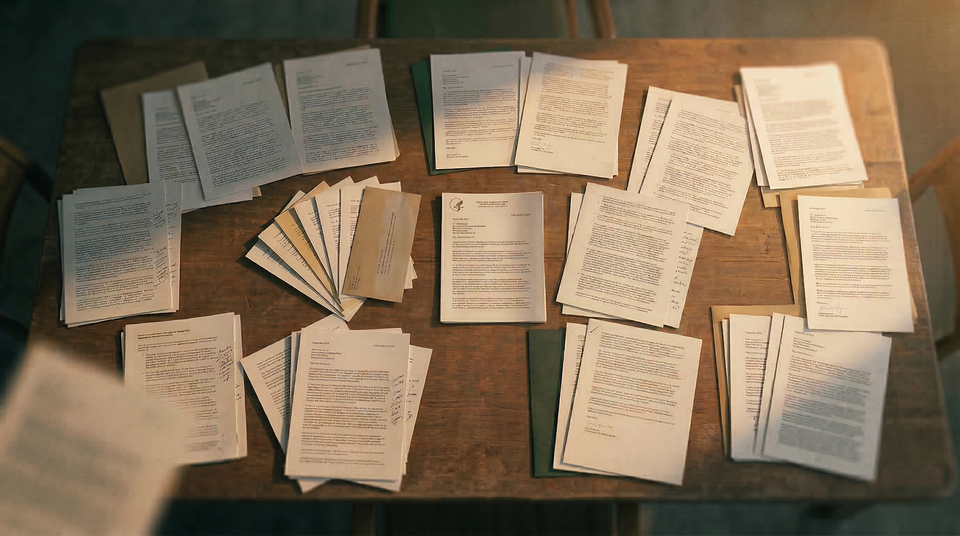

I downloaded every comment with an attachment as it appeared on regulations.gov. The docket contains 53 submissions in total, from individual academics to major pharma companies to professional societies. The central divide is philosophical: how much should Bayesian trial designs be required to satisfy frequentist operating characteristics?

But the volume of comments tells a second story: the industry wants this guidance to work, and it's telling FDA exactly where the draft falls short.

Note: Comments continued to appear after the close date. This post covers submissions posted as of March 18, 2026.

One docket, two philosophies

At one end, Elja Arjas (University of Helsinki) argues the guidance is too conservative. His position is principled and uncompromising: hybrid designs that calibrate posterior thresholds to Type I error should be avoided altogether, because they subordinate Bayesian reasoning to frequentist constraints.

He argues that prior–data conflict is not well-defined outside extreme cases, that simulation studies over candidate priors are demonstrations of Bayes' theorem rather than genuine validation, and that the distinction between design and analysis priors reflects conceptual confusion. In Bayesian thinking, there is no "uncertainty on uncertainty" distinct from ordinary uncertainty.

Arjas references his work with Gasbarra proposing False Discovery Probability as an alternative to Type I error control. This is the most theoretically pure Bayesian position in the docket.

Robert Matthews (Aston University) occupies adjacent but distinct ground. Like Arjas, he is a committed Bayesian. He calls the guidance potentially the most important advance in clinical trials since concealed randomisation. But where Arjas wants fewer frequentist constraints, Matthews wants fewer escape hatches from specifying priors.

He argues that noninformative priors should be discouraged when posterior probabilities drive regulatory decisions. The very existence of a trial implies prior belief in efficacy; a noninformative prior implies none. Discouraging such priors would force sponsors to formalize what is already known, and failing to do that is a major source of research waste (Chalmers & Glasziou 2009).

He also emphasizes skeptical priors, both as a counterweight to sponsor optimism and as an ethical justification for equipoise, and introduces a more novel idea: running Bayes' theorem in reverse.

Yuan Ji (University of Chicago) offers perhaps the most technically precise critique of the calibration framework. He argues against universal posterior probability thresholds (e.g., c = 0.975 or 0.95) and proposes false discovery rate (FDR) or false positive rate (FPR) as a middle ground.

He also suggests decomposing assurance into false-positive and true-positive components, and requiring reporting across multiple sample sizes. This would allow reviewers to distinguish genuine design strength from prior-driven optimism.
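One possible reading of the assurance decomposition is a Monte Carlo split of the unconditional success probability by whether the simulated true effect is actually positive. The sketch below assumes a normal design prior on the treatment effect, a normally distributed estimate, and a simple one-sided z-test success rule. The function name, the prior, and every number are illustrative assumptions and are not taken from Ji's comment.

```python
import random

def decompose_assurance(prior_mean, prior_sd, se, z_crit=1.96,
                        n_sims=50_000, seed=7):
    """Split assurance into true-positive and false-positive parts.

    Each simulated trial draws a true effect from the design prior, then an
    estimate around that effect. "Success" means estimate / se > z_crit.
    """
    rng = random.Random(seed)
    true_pos = false_pos = 0
    for _ in range(n_sims):
        effect = rng.gauss(prior_mean, prior_sd)  # truth under the design prior
        estimate = rng.gauss(effect, se)          # observed trial estimate
        if estimate / se > z_crit:
            if effect > 0:
                true_pos += 1
            else:
                false_pos += 1
    return {
        "assurance": (true_pos + false_pos) / n_sims,
        "true_positive": true_pos / n_sims,
        "false_positive": false_pos / n_sims,
    }

# Hypothetical design: an optimistic prior and three candidate sample sizes,
# expressed through the standard error of the estimate.
results = {se: decompose_assurance(0.2, 0.2, se) for se in (0.15, 0.10, 0.07)}
for se, parts in results.items():
    print(se, parts)
```

Reporting the two components side by side for each sample size shows how much of the headline assurance comes from trials where the drug works, and how that share moves as the design gets larger.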

At the other end, Doctors for America focuses almost entirely on the risks of prior misspecification. Their comment invokes the Cardiac Arrhythmia Suppression Trial (CAST), where observational evidence suggested benefit but the randomized trial showed harm.

Their concern is cascading bias: a flawed prior informing a trial that then becomes the prior for the next trial, compounding error across a development program. They advocate triangulation with frequentist methods, propensity scoring of historical controls, and transparent sensitivity analyses. They also flag real-world data quality concerns: EMRs are optimized for billing, not clinical truth, and AI systems increasingly reflect that.

Peter Jüni and colleagues (Oxford) raise a different concern. Bayesian designs calibrated to maintain 2.5% one-sided Type I error can stop early at evidence levels that would be considered weak in a fully powered frequentist trial (p ≈ 0.02 rather than p ≈ 0.001).

They also caution against interpreting posterior probabilities as positive predictive values. A calibrated Bayesian design and a frequentist design with the same nominal error rate do not necessarily produce the same evidentiary strength at decision.

My own comment sits between these poles. I argued that the guidance should explicitly name the hybrid framework already used in successful submissions: calibrating posterior thresholds to control Type I error while reporting posterior probabilities as interpretable summaries.

I also pushed for operationalizing pre-specification of how a borrowing prior responds when it encounters prior–data conflict, and for evaluating operating characteristics of the composed pipeline rather than of individual components in isolation.
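The hybrid calibration described above can be sketched in a few lines. This is a minimal illustration, not the procedure from any particular submission: it assumes a normal likelihood, a conjugate normal prior, a single point null of zero effect, and made-up numbers for the prior and the standard error. All function names are hypothetical.

```python
import random
from statistics import NormalDist

STD_NORMAL = NormalDist()

def posterior_prob_benefit(estimate, se, prior_mean, prior_sd):
    """Pr(effect > 0 | data) for a normal prior and a normal likelihood."""
    prior_prec, data_prec = 1 / prior_sd**2, 1 / se**2
    post_var = 1 / (prior_prec + data_prec)
    post_mean = post_var * (prior_prec * prior_mean + data_prec * estimate)
    return STD_NORMAL.cdf(post_mean / post_var**0.5)

def null_posterior_probs(se, prior_mean, prior_sd, n_sims=20_000, seed=1):
    """Sorted posterior probabilities from trials simulated with zero true effect."""
    rng = random.Random(seed)
    return sorted(
        posterior_prob_benefit(rng.gauss(0.0, se), se, prior_mean, prior_sd)
        for _ in range(n_sims)
    )

def type1_error(null_probs, threshold):
    """Share of simulated null trials that would be declared successful."""
    return sum(p > threshold for p in null_probs) / len(null_probs)

def calibrate_threshold(null_probs, alpha=0.025):
    """Threshold c such that at most alpha of the null trials exceed c."""
    k = min(int((1 - alpha) * len(null_probs)), len(null_probs) - 1)
    return null_probs[k]

# Hypothetical design with an optimistic informative prior.
null_probs = null_posterior_probs(se=0.15, prior_mean=0.2, prior_sd=0.2)
naive_error = type1_error(null_probs, 0.975)
calibrated_c = calibrate_threshold(null_probs)
calibrated_error = type1_error(null_probs, calibrated_c)
print(f"Type I error at c = 0.975: {naive_error:.3f}")
print(f"Calibrated threshold: {calibrated_c:.4f} (Type I error {calibrated_error:.3f})")
```

With an optimistic prior, a fixed 0.975 threshold lets through more than 2.5% of null trials in this setup, so the calibrated threshold has to sit higher. The posterior probability can still be reported as the interpretable summary; only the decision cutoff changes.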

The guidance is conceptually solid but operationally thin

This was the most consistent critique across the docket, raised independently by academics, pharma companies, CROs, and professional societies.

Cogitars (a Bayesian CRO) noted missing definitions (predictive probabilities), broken links, and imbalanced treatment of dose-finding designs. Servier asked for practical guidance on prior construction and highlighted the absence of Bayesian-specific approaches to missing data.

The March 17 submissions amplified this considerably. AbbVie requested notation consistency and clearer definitions. Regeneron asked for explicit definitions of exchangeability and broader examples beyond borrowing. EMD Serono proposed a tiered documentation structure (protocol, SAP, simulation report). Lundbeck argued documentation burden should match evidentiary contribution.

EFSPI proposed decision trees for prior selection and suggested ~97.5% posterior thresholds aligned with frequentist standards. The Critical Path Institute recommended schematic workflows and conflict diagnostics. IDDI questioned why informative priors are limited to pediatrics and rare diseases.

Both Servier and a biosimilar-focused commenter flagged the lack of software validation standards. Proposed requirements included R-hat, effective sample size, trace plots, and standardized reporting templates (Prior Dossier, Simulation Report, CSR shells).
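For readers who have not computed one of these diagnostics by hand, here is a minimal sketch of R-hat. It implements the classic split version (within-chain versus between-chain variance), not the rank-normalized variant that current MCMC software typically reports, and the chains are simulated stand-ins rather than output from a real sampler.

```python
import random
from statistics import mean, variance

def split_rhat(chains):
    """Classic split R-hat (not rank-normalized) for equal-length chains."""
    half = len(chains[0]) // 2
    # Split every chain in two so that drift within a chain also shows up.
    parts = [chain[i:i + half] for chain in chains for i in (0, half)]
    within = mean(variance(part) for part in parts)
    between = half * variance([mean(part) for part in parts])
    pooled = (half - 1) / half * within + between / half
    return (pooled / within) ** 0.5

rng = random.Random(3)
# Four well-mixed chains, then the same set with one chain stuck elsewhere.
mixed = [[rng.gauss(0, 1) for _ in range(1000)] for _ in range(4)]
stuck = mixed[:3] + [[rng.gauss(2, 1) for _ in range(1000)]]
print(round(split_rhat(mixed), 3), round(split_rhat(stuck), 3))
```

Values near 1 are consistent with chains that agree; the stuck chain pushes the statistic well above that. A reporting template would still need to say which variant was used and what cutoff counts as acceptable.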

The argument is simple: without structure, submissions become inconsistent and FDA review becomes harder. I think they're right.

The volume of "please be more specific" comments, from the people who will actually implement this guidance, is itself a finding.

Greenland's table

Sander Greenland (UCLA) focused on a single issue: how to quantify prior influence.

He argues that effective sample size is insufficient and proposes a simple alternative: a table comparing percentiles (1st through 99th) of treatment effects from three sources: prior alone, data alone, and the final posterior.

This makes prior influence immediately visible. If the prior's 95th percentile is 0.8 and the data-alone 95th percentile is 0.3, the two sources plainly disagree, and the posterior column shows how far the prior has pulled the final answer. No ESS calculation required.

It is a characteristically Greenland proposal: conservative, transparent, and hard to argue against.
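A table of this kind is cheap to produce. The sketch below assumes a normal prior, a normal approximation for the data-alone column (centered on the estimate with its standard error), and the conjugate normal posterior. The percentile grid and all numbers are illustrative choices, not values from Greenland's comment.

```python
from statistics import NormalDist

PERCENTILES = (1, 5, 25, 50, 75, 95, 99)

def percentile_table(prior_mean, prior_sd, estimate, se):
    """Rows of (percentile, prior, data alone, posterior) for a normal model."""
    prior = NormalDist(prior_mean, prior_sd)
    data_alone = NormalDist(estimate, se)
    prior_prec, data_prec = 1 / prior_sd**2, 1 / se**2
    post_var = 1 / (prior_prec + data_prec)
    posterior = NormalDist(
        post_var * (prior_prec * prior_mean + data_prec * estimate),
        post_var**0.5,
    )
    return [
        (p, prior.inv_cdf(p / 100), data_alone.inv_cdf(p / 100),
         posterior.inv_cdf(p / 100))
        for p in PERCENTILES
    ]

# Hypothetical inputs: an optimistic prior and a much smaller observed effect.
table = percentile_table(prior_mean=0.5, prior_sd=0.2, estimate=0.1, se=0.12)
print(f"{'pct':>4} {'prior':>8} {'data':>8} {'posterior':>10}")
for pct, pr, da, po in table:
    print(f"{pct:>4} {pr:>8.2f} {da:>8.2f} {po:>10.2f}")
```

Reading across any row shows where the posterior sits relative to the two sources that produced it, which is the comparison the table is meant to make visible.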

The pediatric case for Bayesian methods

Two comments made a compelling case that Bayesian methods may matter most in pediatric trials.

The American Academy of Pediatrics emphasized heterogeneity across developmental stages. Borrowing from adults may be appropriate for adolescents, but not for younger children.

The Pediatric Inclusion Alliance cited trials achieving 75–78% sample size reductions via informative priors and argued that priors may derive from mechanistic similarity, not just identical compounds.

This is where the ethical stakes are highest. Over-borrowing risks bias. Under-borrowing risks unnecessary trials. The current guidance does not provide enough structure to navigate this tradeoff.

Real regulatory use cases the guidance doesn't cover

Carl Peck (former FDA CDER Director) noted the absence of Bayesian PK/PD modeling, despite decades of use. He also argued for Bayesian approaches to bioequivalence, where data often violate log-normal assumptions.

Others pointed out that biosimilar studies require equivalence-based criteria (e.g., Pr(−δ < Δ < +δ) ≥ c), not superiority formulations.

These are real use cases. If the guidance is silent, sponsors will proceed without a shared framework and reviewers will evaluate ad hoc.
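The equivalence criterion quoted above is straightforward to evaluate once a posterior for the difference is available. The sketch below assumes that posterior is approximately normal; the function names, the margin, and the posterior summaries are hypothetical.

```python
from statistics import NormalDist

def equivalence_probability(post_mean, post_sd, margin):
    """Pr(-margin < difference < +margin) under a normal posterior."""
    posterior = NormalDist(post_mean, post_sd)
    return posterior.cdf(margin) - posterior.cdf(-margin)

def superiority_probability(post_mean, post_sd):
    """Pr(difference > 0) under the same normal posterior."""
    return 1 - NormalDist(post_mean, post_sd).cdf(0.0)

# Hypothetical posterior for a biosimilar-versus-reference difference.
p_equiv = equivalence_probability(post_mean=0.01, post_sd=0.04, margin=0.10)
p_super = superiority_probability(post_mean=0.01, post_sd=0.04)
print(f"Pr(equivalence) = {p_equiv:.3f}, Pr(superiority) = {p_super:.3f}")
```

The same posterior gives a high equivalence probability and an unremarkable superiority probability, which is why a threshold written for one formulation cannot simply be reused for the other.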

The most interesting comment you probably haven't read

Matthew Baggott (Tactogen) highlighted a specific and under-discussed issue: functional unblinding in psychoactive drug trials.

Participants correctly guess treatment assignment 85–95% of the time, introducing expectancy bias that inflates subjective outcomes by ~20–25%. If such trials inform Bayesian priors, that bias propagates forward.

His proposal: develop bias-parameter priors, clarify their relationship to skeptical priors, allow updates from blinding integrity data, require sensitivity analyses, and encourage pre-competitive development of bias inputs.

This applies far beyond psychedelics. Any setting with functional unblinding and subjective endpoints faces the same issue.

What if you run Bayes' theorem backwards?

Matthews proposes "Analysis of Credibility" (AnCred): instead of specifying a prior, solve for the prior required to make a result credible.

This reframes the problem. Rather than agreeing on a prior beforehand, stakeholders can ask whether their own beliefs would support the result.

It traces back to I.J. Good and has been developed by Matthews, Spiegelhalter, and Held. It sidesteps the prior debate by making credibility conditional and transparent.

It also aligns more closely with what regulators actually ask: is there enough evidence to believe this result?
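To make the reverse-Bayes idea concrete, here is a minimal sketch under simplifying assumptions: a normal likelihood summarized by a 95% interval that excludes zero on the positive side, and a sceptical normal prior centered on zero. It solves for the prior width at which the posterior 95% interval just touches zero, then checks the answer with ordinary forward Bayes. The function names are hypothetical and the setup may differ in detail from the formulation in Matthews's comment.

```python
from statistics import NormalDist

Z = NormalDist().inv_cdf(0.975)

def scepticism_limit(lower, upper):
    """Half-width of the zero-centered 95% prior interval at which a
    significant result (0 < lower < upper) just stops being credible."""
    if not 0 < lower < upper:
        raise ValueError("expects a 95% interval that lies entirely above zero")
    estimate = (lower + upper) / 2
    se = (upper - lower) / (2 * Z)
    # Prior variance at which the posterior 95% lower bound is exactly zero.
    prior_var = se**2 / ((estimate / (Z * se)) ** 2 - 1)
    return Z * prior_var**0.5

def posterior_lower_bound(lower, upper, prior_limit):
    """Forward check: 95% lower bound under a N(0, (prior_limit / Z)^2) prior."""
    estimate = (lower + upper) / 2
    se = (upper - lower) / (2 * Z)
    prior_var = (prior_limit / Z) ** 2
    post_var = 1 / (1 / prior_var + 1 / se**2)
    post_mean = post_var * estimate / se**2
    return post_mean - Z * post_var**0.5

limit = scepticism_limit(0.1, 0.9)
print(f"Scepticism limit: {limit:.3f}")
print(f"Posterior lower bound at that prior: {posterior_lower_bound(0.1, 0.9, limit):.6f}")
```

Under these assumptions the limit reduces algebraically to (U − L)² / (4√(UL)) for an interval (L, U). In this example, a zero-centered sceptic whose 95% prior range is narrower than about ±0.53 would not find the result credible, while anyone whose prior allows larger effects would.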

The comment that shouldn't have been filed

The CPAC Foundation submitted a 13-page comment that is articulate and well-structured, and almost entirely content-free from a statistical perspective.

It restates general positions already reflected in the guidance without engaging specific sections or proposing changes. It reads like a generated document rather than a technical comment.

This illustrates a broader risk: as AI makes it easy to produce plausible-sounding submissions, the signal-to-noise ratio in public dockets may deteriorate.

Full Bayesianism means measuring what patients value

An anonymous comment (citing Jason Doctor et al.) argued that Bayesian decision frameworks should incorporate patient utility, not just survival or clinical endpoints.

This follows directly from Savage's axioms linking probability, belief, and utility. Whether it is practical to require this in regulatory settings is another question, but the gap is real.

What I expected to see but didn't

Two notable absences:

Platform trials. No commenter addressed Bayesian borrowing with non-concurrent controls in platform settings, a known issue.

Computational reproducibility. While software validation was discussed, no one addressed Monte Carlo variability in MCMC-based analyses. Posterior summaries themselves are random. The guidance does not define acceptable error thresholds.
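The Monte Carlo point is easy to demonstrate. The sketch below assumes a known normal posterior so the exact answer is available, and it draws independent samples, which is optimistic: real MCMC draws are autocorrelated, so the effective sample size would replace the raw draw count. The posterior, the draw count, and the function names are illustrative.

```python
import random
from statistics import NormalDist

def estimate_posterior_prob(n_draws, seed, post_mean=0.2, post_sd=0.1):
    """Monte Carlo estimate of Pr(effect > 0) from independent posterior draws."""
    rng = random.Random(seed)
    return sum(rng.gauss(post_mean, post_sd) > 0 for _ in range(n_draws)) / n_draws

def monte_carlo_se(prob, n_draws):
    """Standard error of a probability estimated from independent draws."""
    return (prob * (1 - prob) / n_draws) ** 0.5

exact = 1 - NormalDist(0.2, 0.1).cdf(0.0)  # about 0.977
estimates = [estimate_posterior_prob(2_000, seed) for seed in range(20)]
mcse = monte_carlo_se(exact, 2_000)
print(f"exact {exact:.4f}, MCSE {mcse:.4f}")
print(f"range across seeds: {min(estimates):.4f} to {max(estimates):.4f}")
```

Here the exact probability sits just above a 0.975 threshold, and the Monte Carlo standard error is larger than that gap. With this many draws, the seed alone could decide which side of the threshold the reported value lands on, which is the kind of case an acceptable-error standard would need to address.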

The common thread

With 53 submissions, the pattern is clear: Bayesian regulatory science is past debating whether these methods belong in pivotal trials, but not yet aligned on how they should be implemented.

Foundationalists want purity. Practitioners want templates. Specialists want their use cases acknowledged. Advocacy groups want safeguards.

Two conclusions stand out.

First, "operationally thin" is now a consensus critique. FDA will need to add specificity or risk a guidance that everyone endorses and no one can implement.

Second, the strongest methodological comments push toward transparency: making prior influence visible (Greenland), clarifying evidentiary strength at decision (Jüni), choosing error metrics deliberately (Ji), and enabling post hoc credibility assessment (Matthews).

The guidance is a significant step regardless. For the first time, FDA has outlined a comprehensive framework for Bayesian methods as primary analyses in pivotal trials.

The best comments are trying to make that framework usable.

Many evidentiary problems appear during trial design, not after the analysis. I work with teams to review trial designs and run simulation studies to evaluate operating characteristics before protocols are finalized.

For consulting inquiries: maggie@zetyra.com

For more essays on statistical design and regulatory evidence, subscribe to the Evidence in the Wild newsletter.
