The Grammar No One Reads: How Platform Trials Standardize Statistical Design

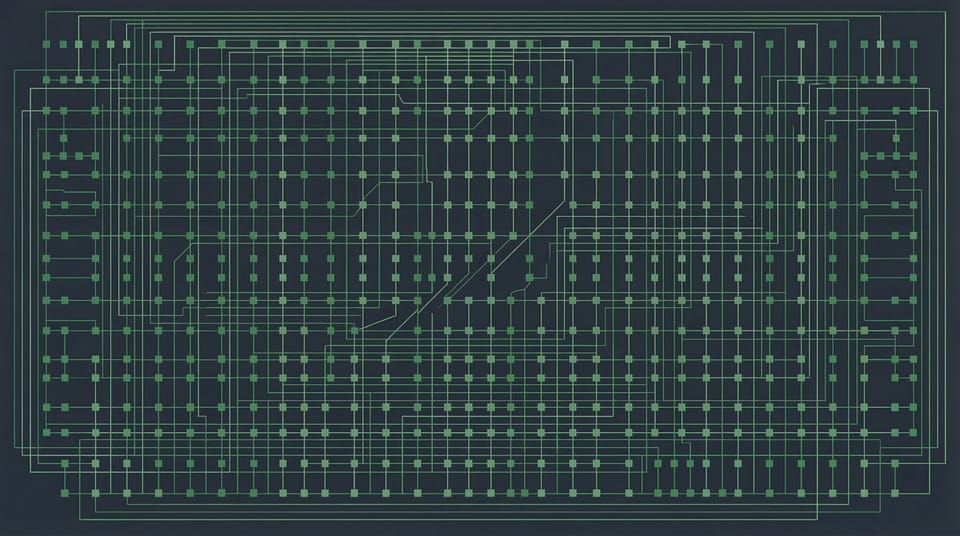

If you look at a single ComboMATCH substudy on ClinicalTrials.gov, you see a clinical trial. Two-stage design, 30 patients, objective response rate. It looks like any Phase II study.

If you look across substudies, you see something different. You see a system.

I was a statistician on two SWOG ComboMATCH substudies. One was a straightforward single-arm trial that made it to activation. The other was a three-cohort protocol with randomized and single-arm components that never enrolled a patient. Designing them taught me something that the published design papers don't emphasize: platform trials impose a shared statistical grammar on every substudy, and most of the real design work happens in the negotiation between that grammar and the specific scientific question.

What the design paper tells you

The Meric-Bernstam et al. paper in Clinical Cancer Research (2023) lays out ComboMATCH's design framework. The key structural choice is that ComboMATCH, unlike its predecessor NCI-MATCH, includes both single-arm and randomized substudies. NCI-MATCH was 39 single-arm Phase II protocols. ComboMATCH had to accommodate randomization because the core scientific question shifted from "does this targeted agent have activity?" to "does adding a second agent improve on the first?"

That shift created two distinct design templates.

For randomized substudies, the platform standardizes around a one-sided alpha of 0.10, a log-rank test for PFS, roughly 30 to 40 patients per arm, and at least 80% power to detect a hazard ratio of about 0.5. Assuming roughly exponential survival, that HR corresponds to doubling median PFS, which is an ambitious but defensible threshold for a signal-seeking study. Every randomized cohort includes a Wieand-type futility rule at the halfway point: if the observed HR favors the control arm (an estimated HR above 1 for combination versus control) at 50% of expected events, the cohort stops.
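To make the randomized template concrete, here is a minimal sketch of the arithmetic behind it. It assumes 1:1 randomization, the Schoenfeld approximation for the number of events, and the usual normal approximation for the log hazard ratio. The function names are mine, and the halfway look is taken as half the required events; none of this is code from a ComboMATCH protocol.

```python
import math
from statistics import NormalDist


def required_events(hr, alpha, power):
    """Events needed for a one-sided log-rank test (Schoenfeld approximation, 1:1 allocation)."""
    z = NormalDist().inv_cdf
    return math.ceil(4 * (z(1 - alpha) + z(power)) ** 2 / math.log(hr) ** 2)


def interim_stop_probability(true_hr, interim_events):
    """Chance the estimated HR is above 1 at the interim look.

    Uses log(HR_hat) ~ Normal(log(true_hr), 4 / interim_events),
    an approximation that is rough at small event counts.
    """
    sd = 2 / math.sqrt(interim_events)
    return 1 - NormalDist(mu=math.log(true_hr), sigma=sd).cdf(0.0)


events = required_events(hr=0.5, alpha=0.10, power=0.80)
half = events // 2  # assumed timing of the futility look

print("events required:", events)
print("P(stop | no effect):", interim_stop_probability(1.0, half))
print("P(stop | HR = 0.5):", round(interim_stop_probability(0.5, half), 3))
```

Under these assumptions the rule stops about half of truly null cohorts at the midpoint and only a small fraction of cohorts where the combination really does halve the hazard.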

For single-arm substudies, the template is a Simon-type two-stage design with an objective response endpoint. Null response rates sit in the 10% to 30% range depending on how much single-agent activity is already established. The alternative is typically 15 to 20 percentage points higher. Maximum enrollment is 20 to 30 patients, with the option to stop for futility after the first 10 to 15.
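The operating characteristics of a two-stage design can be computed exactly from binomial probabilities. The sketch below does that for one illustrative design: 13 patients in stage one, stop if 3 or fewer respond, 43 patients in total, declare activity if more than 12 respond. That is a commonly tabulated Simon optimal design for 20% versus 40%, not a ComboMATCH design, and it is larger than the platform's usual ceiling. I use it only because its properties are easy to check. The function names are my own.

```python
from math import comb


def binom_pmf(k, n, p):
    """P(X = k) for X ~ Binomial(n, p)."""
    return comb(n, k) * p**k * (1 - p) ** (n - k)


def binom_cdf(k, n, p):
    """P(X <= k) for X ~ Binomial(n, p)."""
    return sum(binom_pmf(i, n, p) for i in range(0, k + 1))


def two_stage_oc(n1, r1, n, r, p):
    """Return (P(early stop), P(declare activity)) at true response rate p.

    Stop after stage one if responses <= r1.
    Declare activity if total responses across both stages > r.
    """
    pet = binom_cdf(r1, n1, p)
    n2 = n - n1
    declare = 0.0
    for x1 in range(r1 + 1, n1 + 1):
        need = r + 1 - x1  # stage-two responses still required
        tail = 1.0 if need <= 0 else 1 - binom_cdf(need - 1, n2, p)
        declare += binom_pmf(x1, n1, p) * tail
    return pet, declare


# Illustrative design only (see the note above).
n1, r1, n, r = 13, 3, 43, 12
pet_null, alpha = two_stage_oc(n1, r1, n, r, p=0.20)
_, power = two_stage_oc(n1, r1, n, r, p=0.40)
expected_n_null = n1 + (1 - pet_null) * (n - n1)

print("P(early stop | null):", round(pet_null, 3))
print("type I error:", round(alpha, 3))
print("power:", round(power, 3))
print("expected N under null:", round(expected_n_null, 1))
```

Because the outcome is discrete, the achieved alpha and power rarely land on round numbers, which is one reason protocols report values like 6% and 82% rather than 5% and 80%.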

These are the published rules. They're accurate. But they describe the grammar without explaining how it feels to write in it.

Where the grammar binds

The first thing you notice as a substudy statistician is that the parameters aren't really yours to choose. The platform's Statistical Design Development Working Group reviews every concept. If you propose a one-sided alpha of 0.05 for a randomized cohort, you'll be asked why you need tighter error control in a signal-seeking study. If you propose 90% power, you'll be asked whether the additional sample size is feasible given accrual projections. The grammar has defaults, and departing from them requires justification.

This matters more than it sounds. The difference between 80% and 85% power for a PFS endpoint with HR 0.5 might be five patients per arm. In a platform trial where every patient is screened through a central assignment algorithm, where the biomarker intersection might be present in 2% of registered patients, five patients per arm is not a rounding error. It's six months of accrual.
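The accrual arithmetic is simple enough to sketch. The 2% match rate comes from the paragraph above. The registration rate of 85 patients per month is a hypothetical figure I picked so the result lands near six months; it is not a ComboMATCH statistic. The function name and the optional enrollment fraction are likewise my own.

```python
def extra_accrual_months(extra_patients, registrations_per_month, match_rate,
                         enroll_fraction=1.0):
    """Months needed to accrue extra patients to one substudy.

    match_rate: share of registered patients with the required biomarker profile.
    enroll_fraction: share of matched patients who actually enroll (assumed 1.0 here).
    """
    monthly_enrollment = registrations_per_month * match_rate * enroll_fraction
    return extra_patients / monthly_enrollment


# Five more patients per arm in a two-arm cohort = ten patients.
months = extra_accrual_months(extra_patients=10,
                              registrations_per_month=85,  # hypothetical
                              match_rate=0.02)
print(round(months, 1))
```

If only some matched patients enroll, lowering `enroll_fraction` stretches the timeline further, which is why a small change in power is a real feasibility question.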

I've been in the room where a substudy's operating characteristics changed three times between concept approval and protocol submission. The approved concept had one set of error rates. The submitted protocol had drifted from them. The review committee caught the drift and corrected it back. Then the committee's own recalculation disagreed with ours. None of this was adversarial. It was the normal friction of fitting a specific scientific question into a standardized framework, and it's completely invisible in the final protocol document.

The second binding constraint is the accrual kill switch. Every ComboMATCH substudy has one. If enrollment falls below roughly 35% of projected accrual at one year or 50% at two years, the substudy terminates. This isn't a suggestion. It's a protocol-specified decision rule, and it exists because the platform has learned from NCI-MATCH that slow-accruing substudies rarely recover. The kill switch protects the platform's resources, but it also shapes the design: if your biomarker prevalence estimate is optimistic, you don't just risk statistical power. You risk never finishing enrollment.
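As a decision rule, the kill switch reduces to a comparison at two checkpoints. The sketch below uses the approximate thresholds quoted above (35% at one year, 50% at two years). The function name, the enrollment numbers, and the strict "below" comparison are my assumptions, and actual protocol language may differ.

```python
# Approximate thresholds as described in the text: fraction of projected accrual.
ACCRUAL_THRESHOLDS = {1: 0.35, 2: 0.50}


def should_terminate(enrolled, projected, year):
    """True if accrual at a yearly checkpoint is below the threshold fraction of projection."""
    if year not in ACCRUAL_THRESHOLDS:
        raise ValueError("checkpoints are defined only at years 1 and 2")
    if projected <= 0:
        raise ValueError("projected accrual must be positive")
    return enrolled / projected < ACCRUAL_THRESHOLDS[year]


# Hypothetical substudy projecting 20 patients by year one and 40 by year two.
print(should_terminate(enrolled=6, projected=20, year=1))   # 30% of projection
print(should_terminate(enrolled=8, projected=20, year=1))   # 40% of projection
print(should_terminate(enrolled=19, projected=40, year=2))  # 47.5% of projection
```

The rule depends on the projection as much as on enrollment: an optimistic prevalence estimate inflates the denominator and makes the same accrual look worse.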

The design types you don't see on ClinicalTrials.gov

The published substudies show the simple cases. A single-arm two-stage design for a combination in a molecularly defined population. A randomized comparison of combination versus monotherapy.

The substudies that never activated were often more ambitious. One design I worked on had three cohorts: two randomized and one single-arm. The randomized cohorts compared combination versus single-agent therapy in two different disease contexts, with PFS as the primary endpoint. The single-arm cohort enrolled patients who had already progressed on the class of single agent being studied, testing whether the combination could rescue them. The one-sided alpha for the randomized cohorts was 0.10. For the single-arm cohort, it was 0.03%, or 0.0003 on the same scale. That isn't a typo. When you have strong evidence that monotherapy doesn't work in the pretreated population, the null response rate is already very low, so even a few responses are hard to attribute to chance and the design can carry a much tighter alpha.

The single-arm cohort also accepted crossover patients from other ComboMATCH substudies, not just from its own randomized arms. That's a design feature unique to platform trials. A patient who progresses on the control arm of one substudy can potentially be reassigned to a different substudy whose combination targets the same molecular alteration through a different mechanism. The statistical implication is that the pretreated cohort's enrollment depends on accrual patterns across the entire platform, not just within its own protocol.

This kind of cross-substudy dependency is what makes platform trial design genuinely different from standalone trial design. It's also what makes it fragile. The three-cohort substudy I described needed 150 patients across all cohorts. The accrual projection assumed a specific molecular alteration would be present in enough registered patients to fill all three cohorts within a reasonable timeframe. When the therapeutic landscape shifted and the relevant drug class became commercially available, the substudy lost its rationale before it could open.

What the grammar optimizes for

The ComboMATCH grammar isn't arbitrary. It reflects a set of values that were negotiated across five cooperative groups, the NCI's Biometric Research Program, and CTEP.

The one-sided 0.10 alpha for randomized substudies is deliberately permissive. These are signal-seeking studies, not registrational trials. A false positive here means investing resources in a confirmatory trial that ultimately fails. That's expensive, but it's recoverable. A false negative means abandoning a combination that might have worked. In rare biomarker-defined populations where alternative treatment options are limited, that's a harder loss. The error asymmetry is intentional.

The two-stage futility monitoring for single-arm substudies and the Wieand rule for randomized substudies both serve the same function: they protect the platform, not just the substudy. A substudy that continues to enroll despite clear futility consumes screening slots, biomarker testing resources, and clinical sites that could serve other substudies. In a platform trial, every patient enrolled on a failing substudy is a patient who might have been matched to a different combination.

The accrual kill switches serve this same protective function at a different timescale. Futility rules protect against ineffective treatments. Accrual rules protect against infeasible designs. Both are necessary because the platform has limited bandwidth and dozens of substudies competing for it.

What this means for the reader

If you read a ComboMATCH protocol and see a two-stage design with a null response rate of 20% (H₀), an alternative of 40% (H₁), a one-sided alpha of 6%, and 82% power, you're reading the output of a negotiation. The null hypothesis reflects prior evidence about single-agent activity and the committee's judgment about what response rate would be uninteresting. The alternative reflects clinical ambition constrained by feasibility. The alpha and power reflect the platform's tolerance for different kinds of errors, calibrated against the resource cost of each enrolled patient.

None of these numbers were derived from a textbook formula and written into the protocol. They emerged from a process that involved the study team, the platform's statistical review group, the molecular biomarker committee, the steering committee, and the NCI's Cancer Therapy Evaluation Program. Each body brought different priorities: scientific rigor, accrual feasibility, biomarker prevalence, drug supply logistics, regulatory expectations.

The final statistical design section of a ComboMATCH protocol reads like a clean set of specifications. It doesn't read like what it actually is: a compressed record of months of negotiation among competing constraints.

I don't think this is a problem. The negotiation is the design process working as intended. But it means that reading a protocol's statistical section as a derivation rather than a settlement understates the judgment embedded in every parameter.

Platform trials are often described as efficient. They are, in the sense that shared infrastructure reduces per-substudy overhead. But the efficiency is purchased through standardization, and standardization means that the grammar shapes the science at least as much as the science shapes the grammar.

That tradeoff is worth understanding if you're designing a substudy, reviewing one, or trying to interpret what a platform trial's results actually mean.

I was a statistician at SWOG Cancer Research Network from 2019 to 2022, where I worked on ComboMATCH substudies EAY191-S3 and S4. S3 is now closed to accrual. S4 never activated. A companion post on why most platform trial substudies never reach patients is forthcoming.

The Meric-Bernstam et al. design paper is available open-access at Clinical Cancer Research. The Wieand futility rule is described in Wieand, Schroeder, and O'Fallon, Statistics in Medicine (1994).

For consulting inquiries: maggie@zetyra.com

